Transforming psychological assessment workflows

psynth

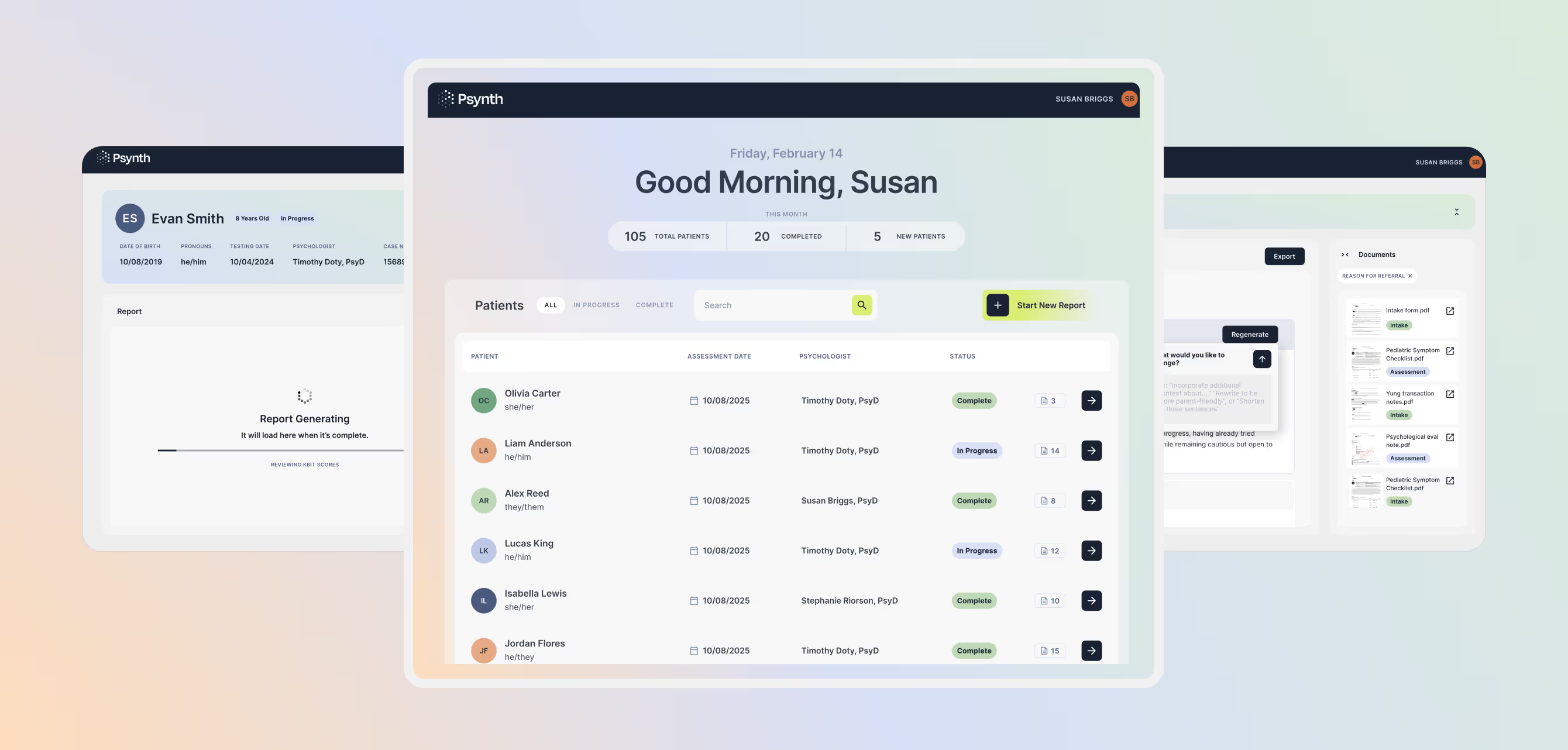

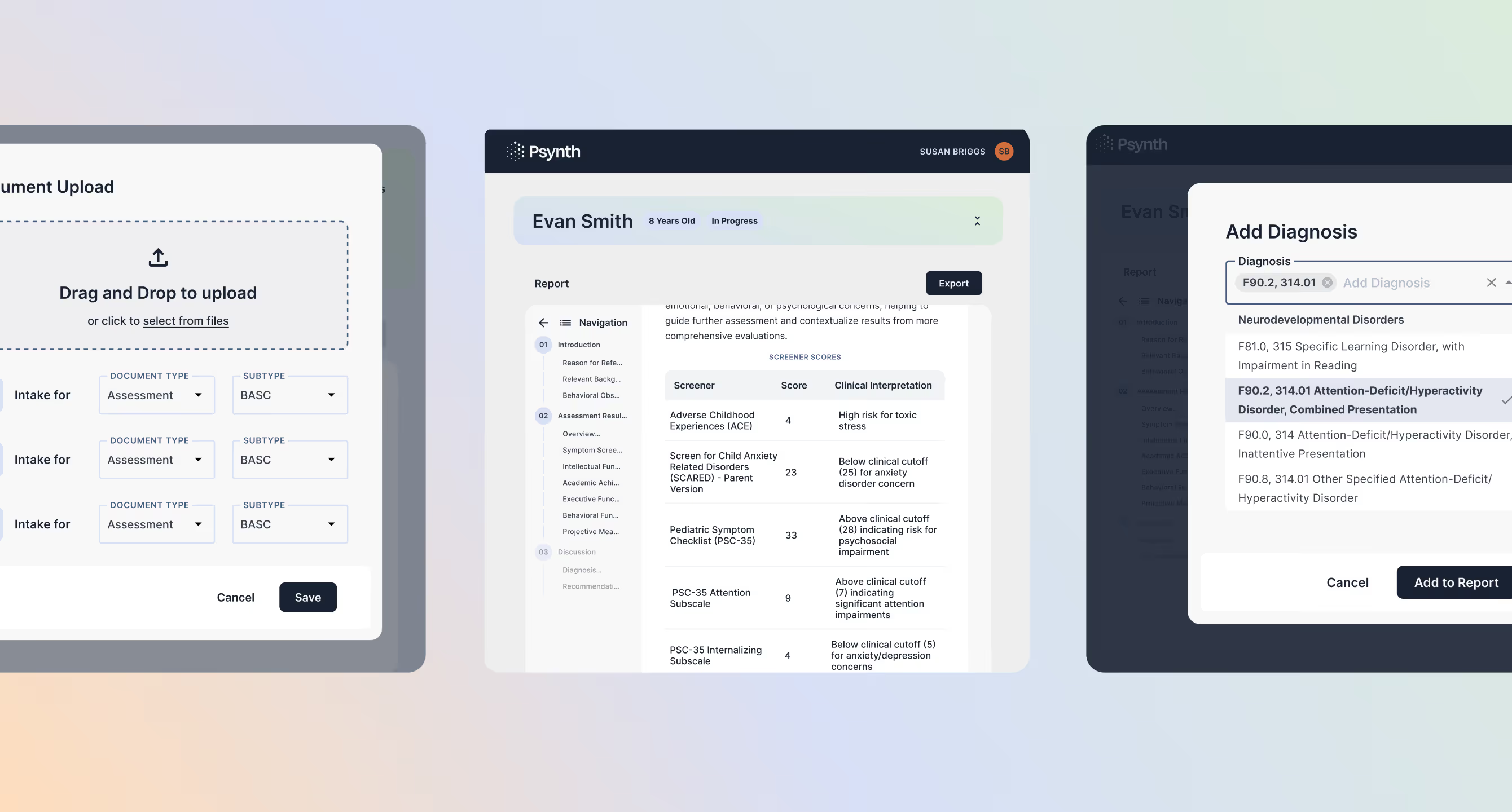

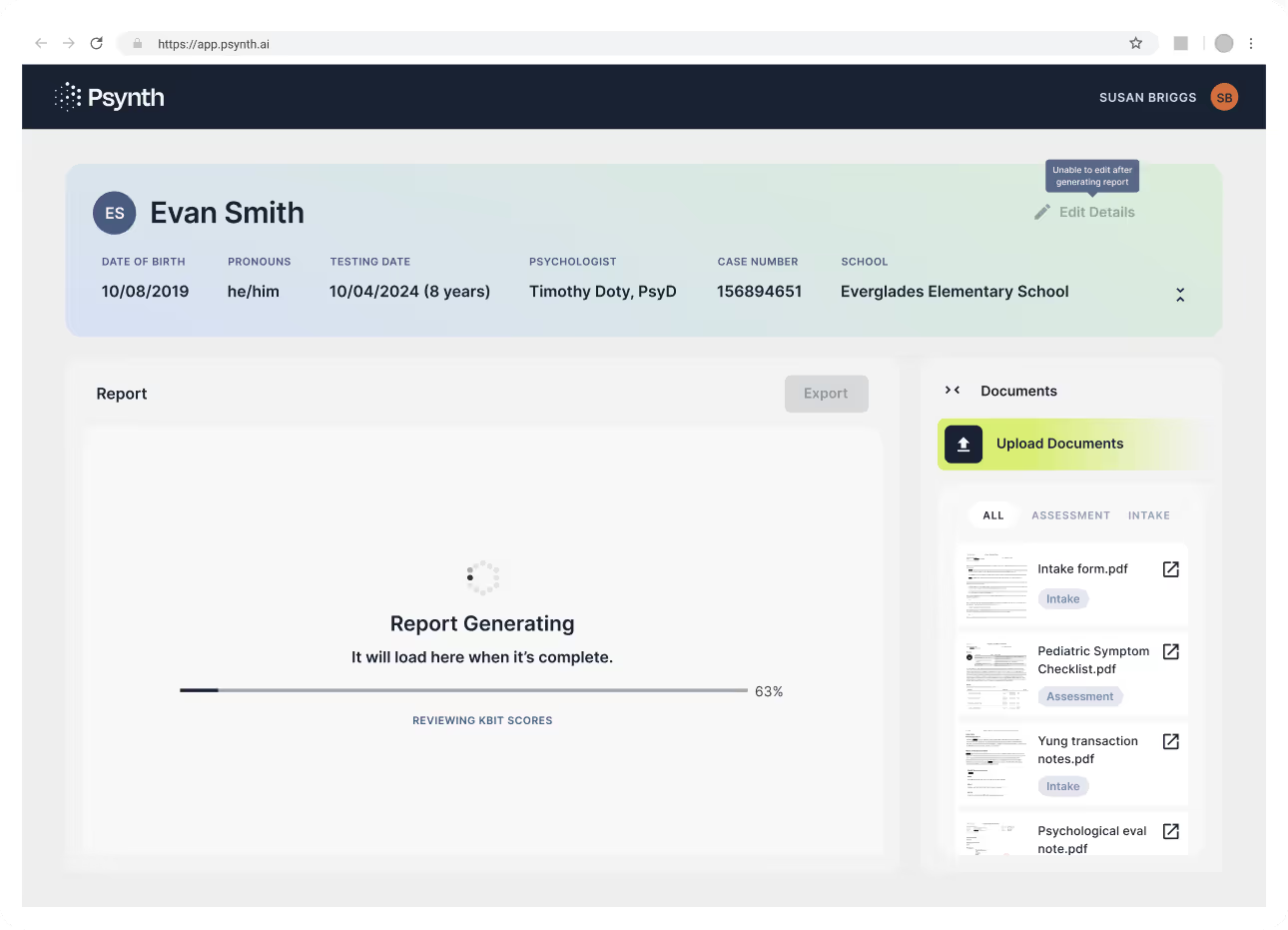

Psynth is an AI-powered psychological assessment platform that automates clinical report generation by synthesizing information from multiple intake and assessment documents. Psychologists upload patient files, and the system labels the content by report section and generates a comprehensive clinical report. This reduces a process that once took days to minutes while minimizing human error.

The core challenge was streamlining a traditionally manual, error-prone workflow in which psychologists must extract, organize, and format information from many documents into standardized reports. The process involves document overload, repetitive formatting, and a high risk of missed or misattributed details.

Our goal was to design a system that automated synthesis without sacrificing clinical oversight: creating a clear, efficient workflow from patient creation to final report, while making it transparent which source documents informed each section so psychologists could easily verify accuracy.

Key Design Decisions

Decision 1: Unified Patient and Report Workspace

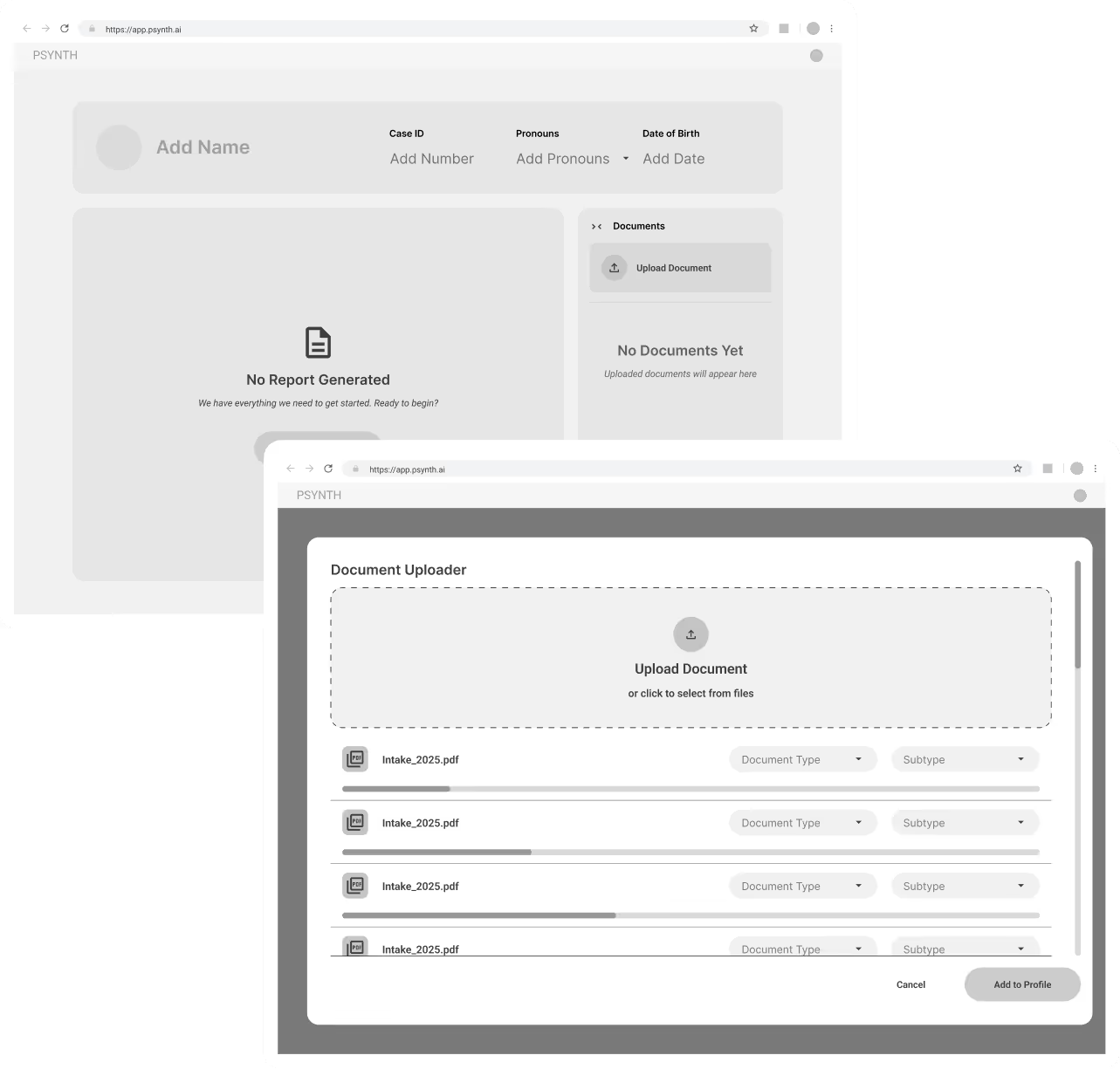

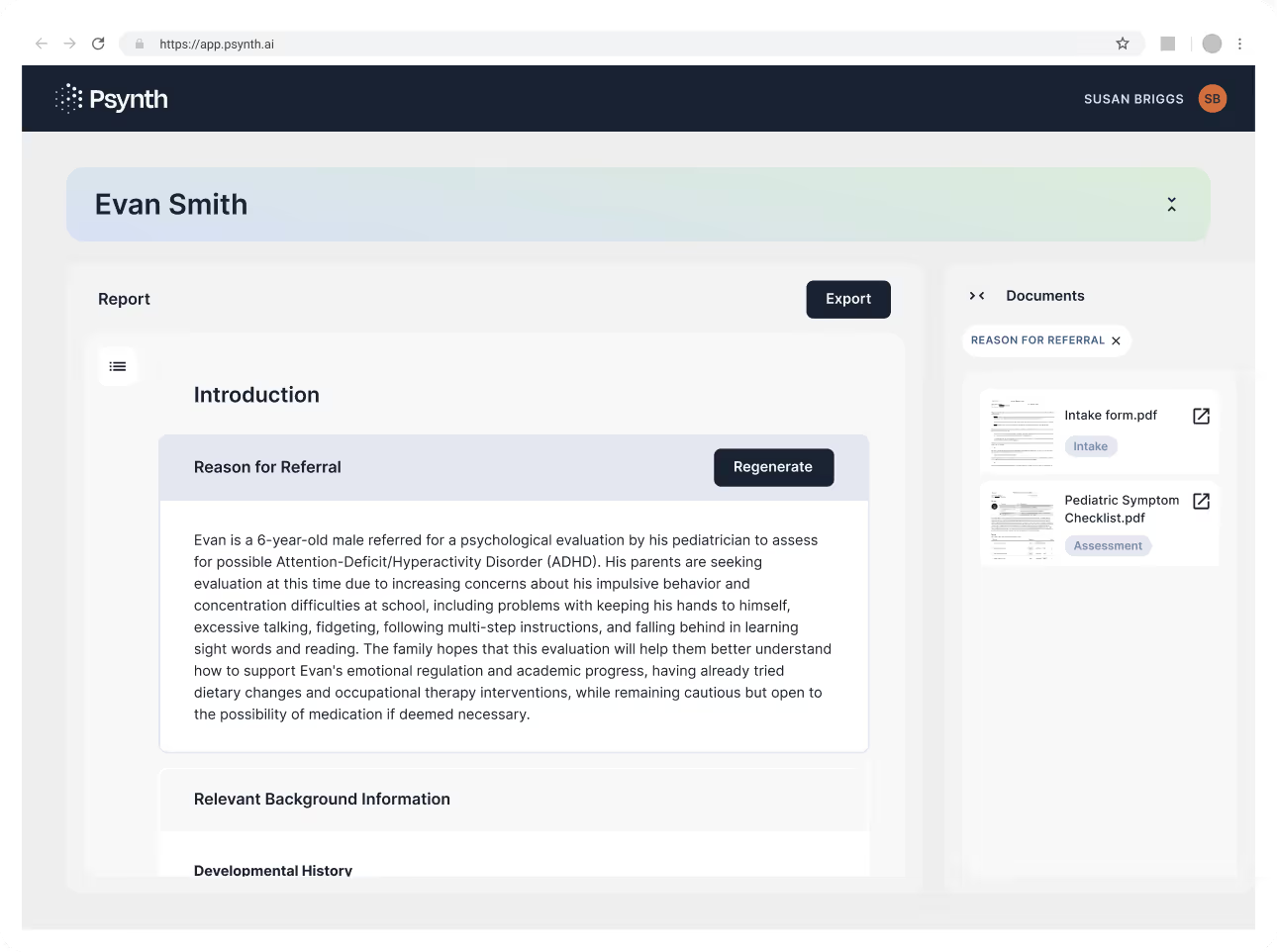

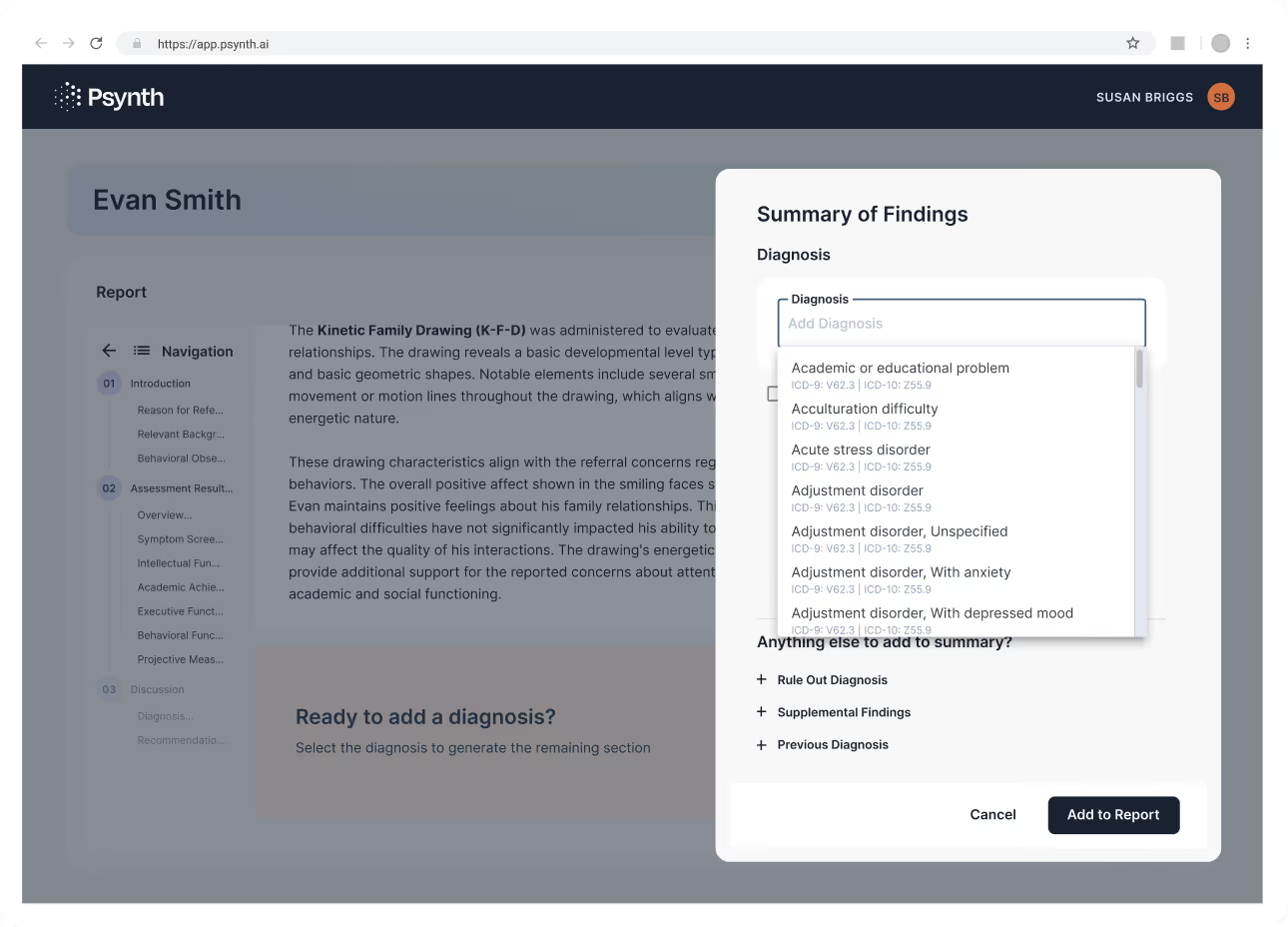

Rather than separating patient details and report creation into different views, I combined the patient profile and report workspace into a single cohesive space. This ensured all relevant context, including patient information, uploaded documents, and report content, was visible in one place. By eliminating view switching, psychologists could stay focused, work more efficiently, and maintain confidence that they were editing the report with full patient context at all times.

Decision 2: Inline Source Attribution

Psychologists needed to trust the AI-generated content without re-reading every source document. I designed inline source attribution that showed which documents informed each report section, with one-click access to the specific excerpts used. This transparency allowed psychologists to verify accuracy by quickly spot-checking key sections rather than reviewing everything, significantly reducing verification time while maintaining clinical standards.

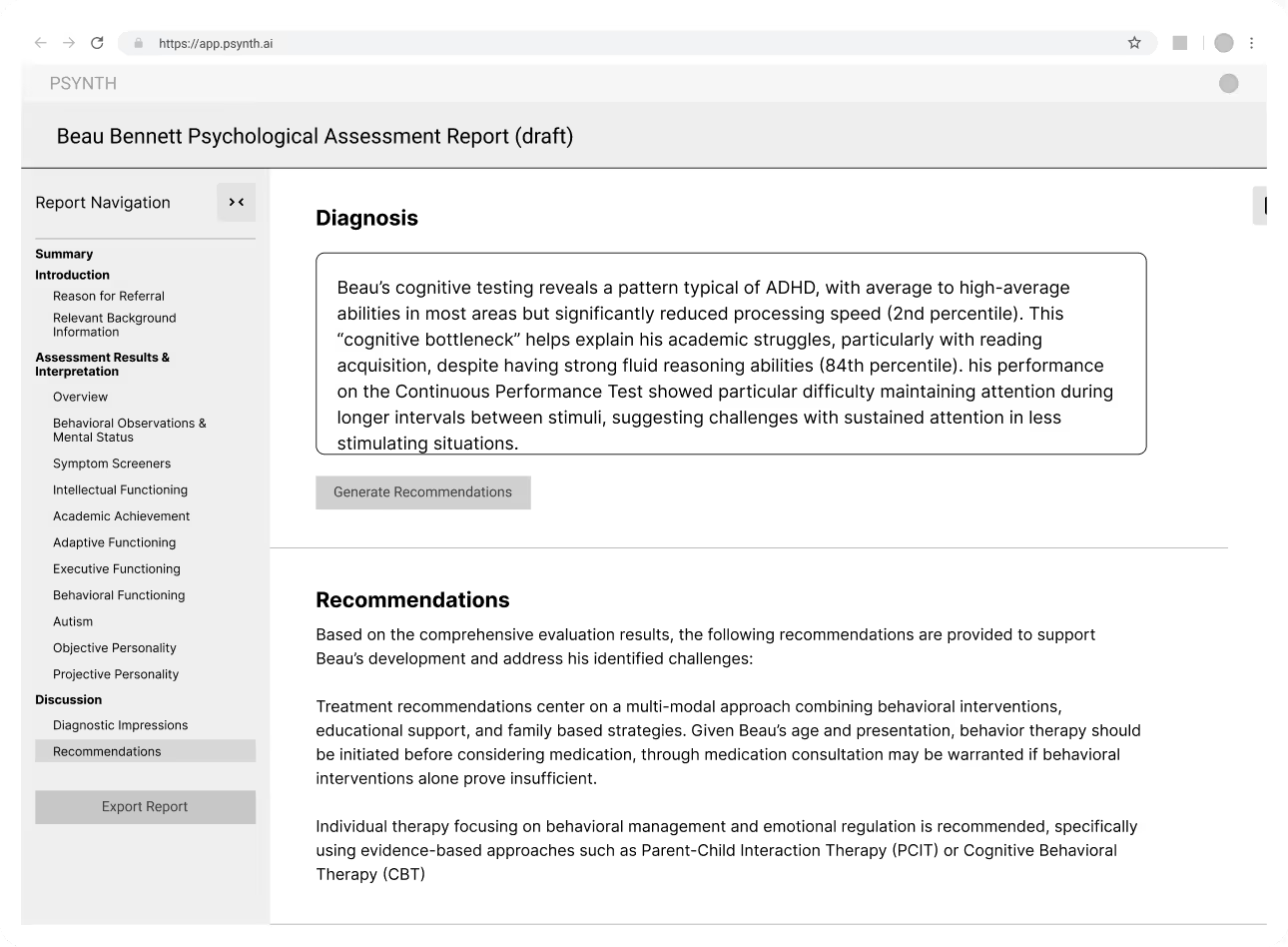
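As a rough illustration of the data shape this decision implies (a sketch only, not Psynth's actual implementation), each report section can carry the excerpts that informed it. The class names `Excerpt` and `ReportSection`, the file names, and the placeholder text are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Excerpt:
    """A passage from one uploaded document that informed a report section."""
    document: str  # hypothetical file name of the source document
    text: str      # the quoted passage shown when the source is opened


@dataclass
class ReportSection:
    """One report section together with the excerpts it was generated from."""
    title: str
    content: str
    sources: List[Excerpt] = field(default_factory=list)

    def source_documents(self) -> List[str]:
        """Distinct document names in first-seen order, for the inline label."""
        return list(dict.fromkeys(e.document for e in self.sources))

    def excerpts_from(self, document: str) -> List[str]:
        """Excerpts to display when one source document is clicked."""
        return [e.text for e in self.sources if e.document == document]


# Hypothetical example data; real sections and documents would differ.
section = ReportSection(
    title="Background History",
    content="Placeholder summary text.",
    sources=[
        Excerpt("intake_form.pdf", "Placeholder excerpt A."),
        Excerpt("school_report.pdf", "Placeholder excerpt B."),
        Excerpt("intake_form.pdf", "Placeholder excerpt C."),
    ],
)

print(section.source_documents())
print(section.excerpts_from("intake_form.pdf"))
```

Keeping the excerpts attached to the section, rather than in a separate lookup, is what makes the one-click spot check cheap: the interface only needs the section it is already rendering.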

Decision 3: Sectioned Editing Instead of a Document Editor

Instead of mimicking a traditional document editor, I intentionally sectioned the report into clearly labeled, discrete parts that matched clinical report structure. Each section could be reviewed and edited independently, making it obvious what content belonged where and reducing cognitive load. This approach helped psychologists move through the report methodically, ensuring nothing was overlooked and reinforcing a sense of clarity and control during review.

Research and Discovery

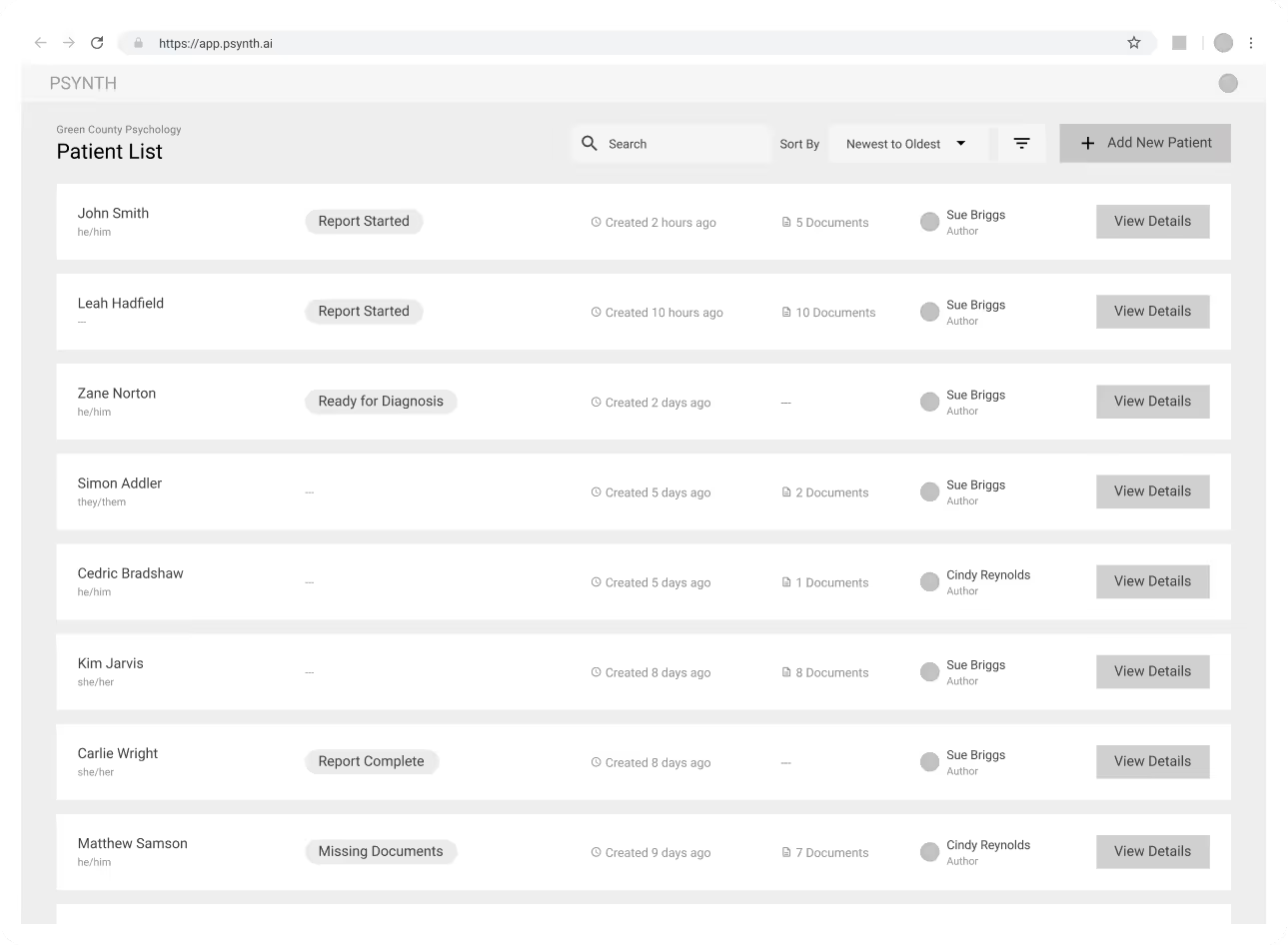

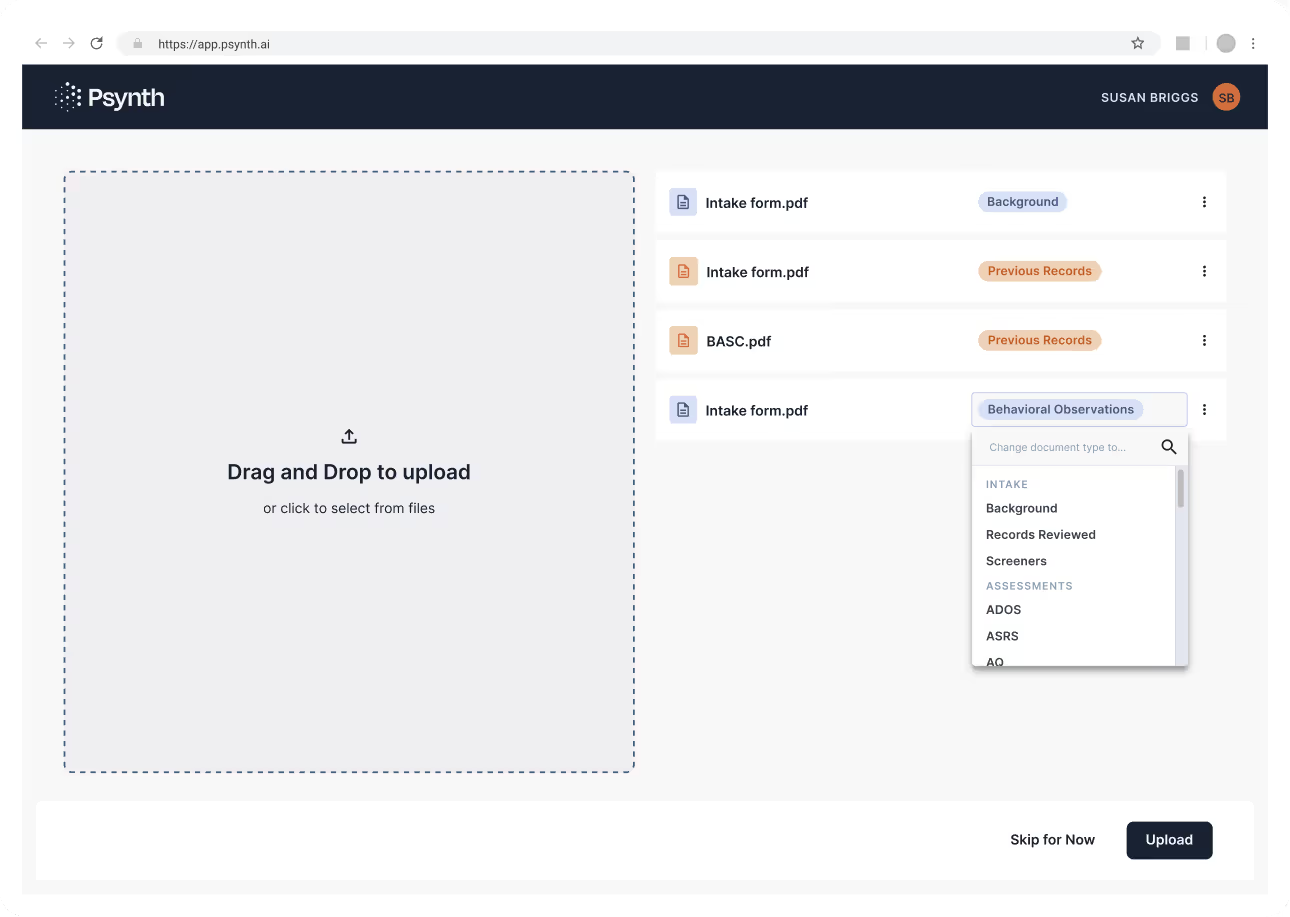

We conducted stakeholder research with clinical psychologists, psychiatrists, and healthcare administrators, and I partnered with the research team to synthesize findings into clear UX priorities. We learned that reviewing and extracting information from 5–10+ documents per patient was the most time-consuming and error-prone part of report writing, particularly making sure content landed in the correct report sections.

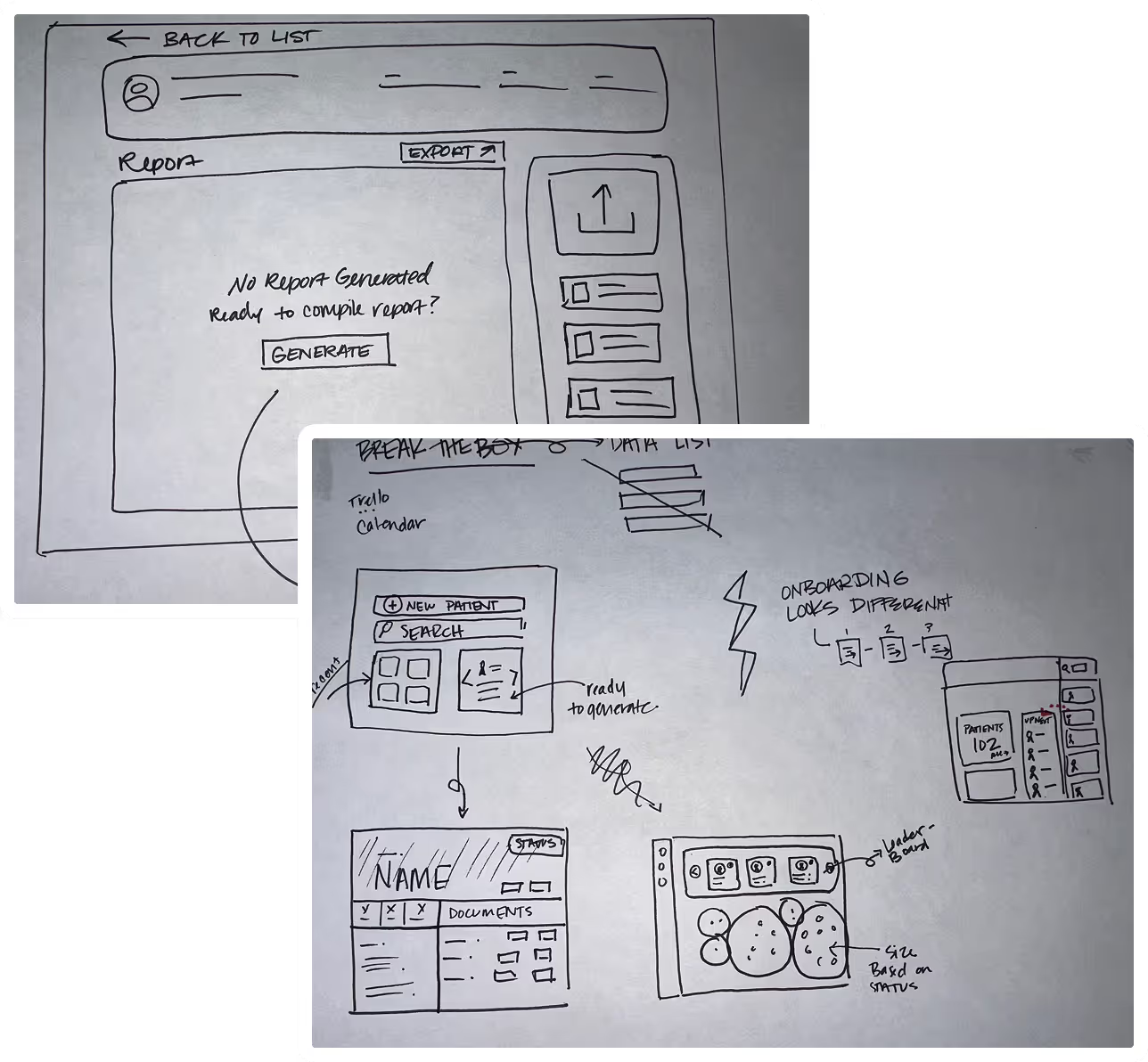

Design & Iteration

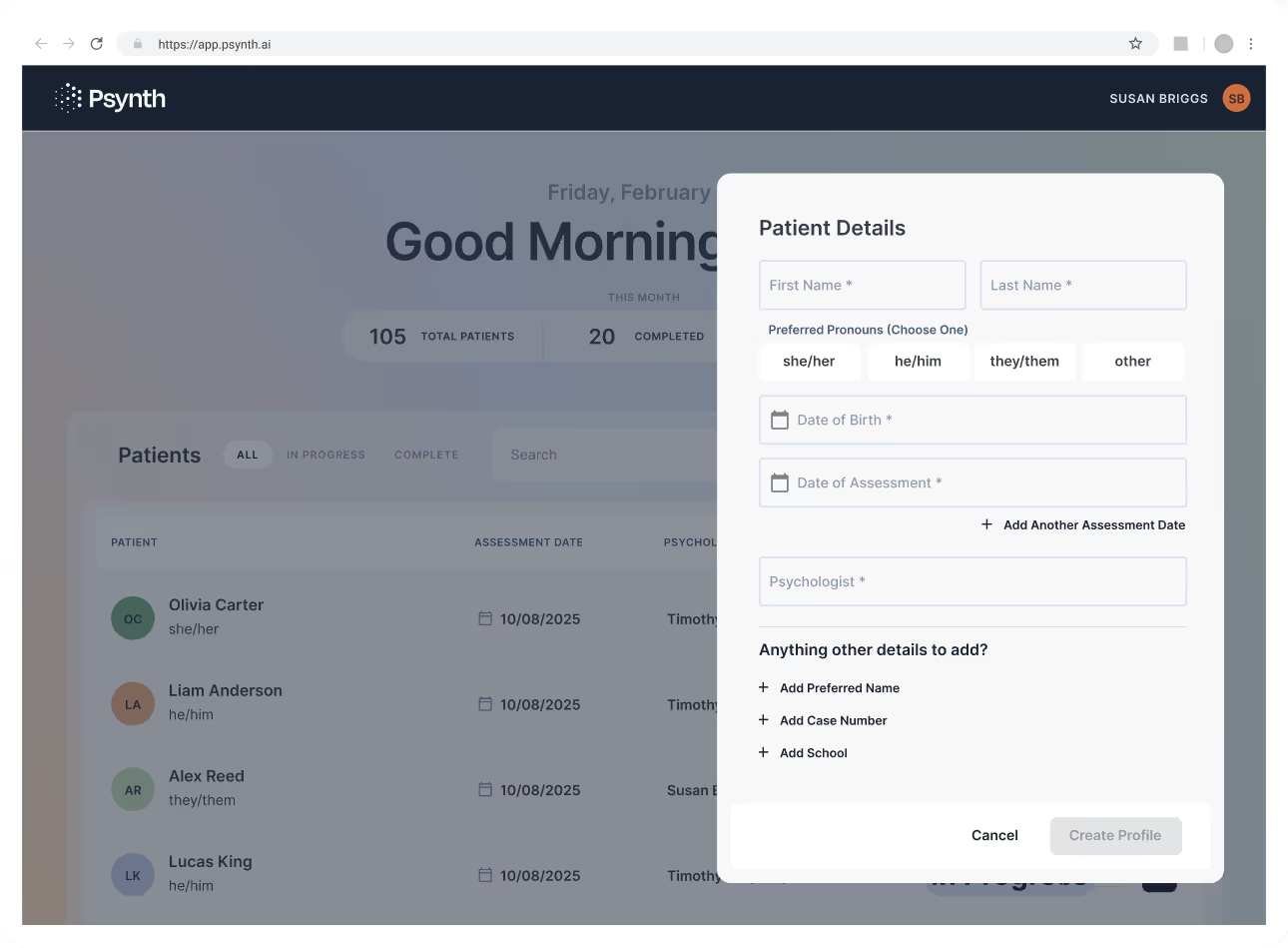

The core design challenge was creating a clear, linear workflow that guided psychologists from patient creation to a finalized report without friction. I designed a step-by-step flow that moved from adding patient details, to bulk uploading documents, to reviewing AI-generated labels, and finally to generating the report with a single action, all supported by clear progress indicators and obvious next steps.
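The linear flow described above can be sketched as an ordered set of steps with a progress label and a single obvious next step. This is a minimal illustration under assumed names (`Step`, `next_step`, `progress_label`), not the product's actual code.

```python
from enum import IntEnum
from typing import Optional


class Step(IntEnum):
    """The four workflow stages, in order (names are illustrative)."""
    ADD_PATIENT = 1
    UPLOAD_DOCUMENTS = 2
    REVIEW_LABELS = 3
    GENERATE_REPORT = 4


def next_step(current: Step) -> Optional[Step]:
    """Return the step that follows `current`, or None at the end of the flow."""
    if current is Step.GENERATE_REPORT:
        return None
    return Step(current + 1)


def progress_label(current: Step) -> str:
    """Text for a progress indicator, e.g. 'Step 3 of 4: Review Labels'."""
    name = current.name.replace("_", " ").title()
    return f"Step {current.value} of {len(Step)}: {name}"


print(progress_label(Step.REVIEW_LABELS))
print(next_step(Step.REVIEW_LABELS))
```

Modeling the flow as a strict sequence means there is never more than one next action to present, which is the property the progress indicators rely on.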

Outcomes/Impact

Psynth has transformed psychological report generation for a growing base of early users, reducing what was once a multi-day process to minutes and significantly cutting rework. Feedback consistently validates the core value: psychologists save substantial time while maintaining confidence in accuracy. With an active and expanding early user group, we’re able to rapidly iterate on the product, continuously refining the AI labeling and report templates based on real-world usage and direct user feedback.